We do not experience the world as parallel sensory streams; rather, the information extracted from different modalities fuses to form a seamlessly unified multi-sensory percept dynamically evolving over time. There is a compelling benefit to multimodal information: behavioral studies show that combining information across sensory domains enhances unimodal detection ability and can even induce new, integrated percepts. The relevant neuronal mechanisms have been widely investigated. One typical view posits that multisensory integration occurs at later stages of cortical processing, subsequent to unisensory analysis.

This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Funding: This work was supported by National Institutes of Health 2R01DC05660 to DP as well as a 973 grant from the National Strategic Basic Research program of the Ministry of Science and Technology of China (2005CB522800), National Nature Science Foundation of China grants (30621004, 90820307), and Chinese Academy of Sciences grants (KSCX2-YW-R-122, KSCX2-YW-R-259). The funders had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.

Competing interests: The authors have declared that no competing interests exist.

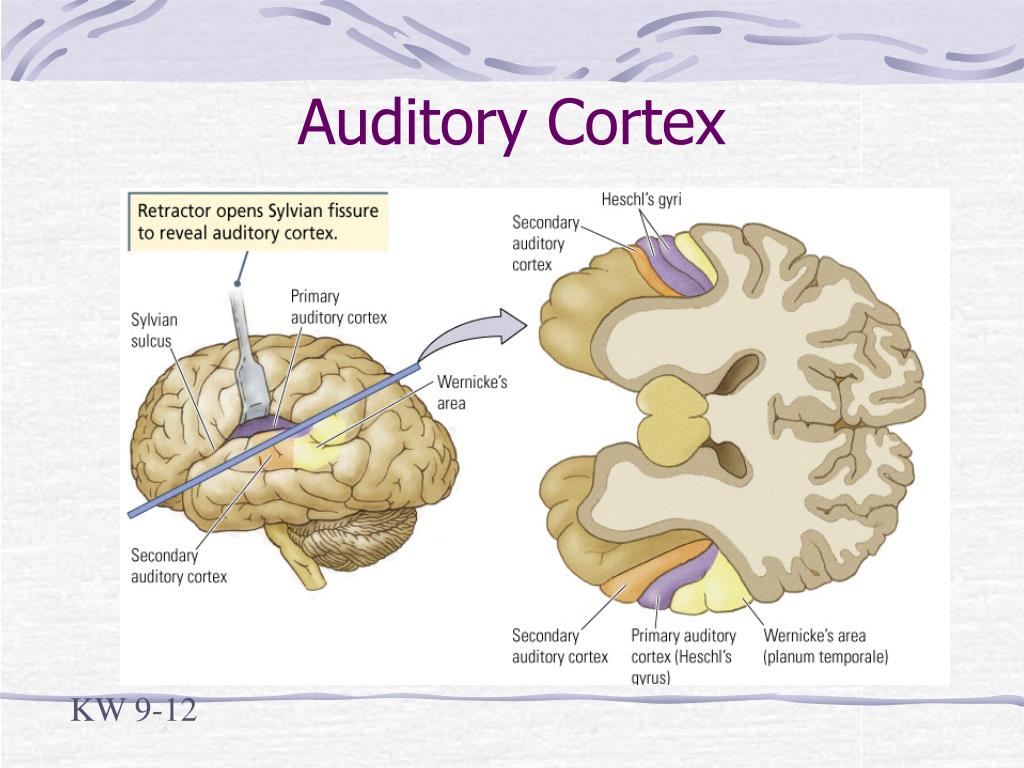

When faced with ecologically relevant stimuli in natural scenes, our brains need to coordinate information from multiple sensory systems in order to create accurate internal representations of the outside world. Unfortunately, we currently have little information about the neuronal mechanisms for this cross-modal processing during online sensory perception under natural conditions. Neurophysiological and human imaging studies are increasingly exploring the response properties elicited by natural scenes. In this study, we recorded magnetoencephalography (MEG) data from participants viewing audiovisual movie clips. We developed a phase coherence analysis technique that captures, in single trials of watching a movie, how the phase of cortical responses is tightly coupled to key aspects of stimulus dynamics. Remarkably, auditory cortex not only tracks auditory stimulus dynamics but also reflects dynamic aspects of the visual signal. Similarly, visual cortex mainly follows the visual properties of a stimulus, but also shows sensitivity to the auditory aspects of a scene. The critical finding is that cross-modal phase modulation appears to lie at the basis of this integrative processing. Continuous cross-modal phase modulation may permit the internal construction of behaviorally relevant stimuli. Our work therefore contributes to the understanding of how multi-sensory information is analyzed and represented in the human brain.

Citation: Luo H, Liu Z, Poeppel D (2010) Auditory Cortex Tracks Both Auditory and Visual Stimulus Dynamics Using Low-Frequency Neuronal Phase Modulation. PLoS Biol 8(8):

Academic Editor: Robert Zatorre, McGill University, Canada

Received: Ap Accepted: J Published: August 10, 2010

Copyright: © 2010 Luo et al.
Integrating information across sensory domains to construct a unified representation of multi-sensory signals is a fundamental characteristic of perception in ecological contexts. One provocative hypothesis deriving from neurophysiology suggests that there exists early and direct cross-modal phase modulation. We provide evidence, based on magnetoencephalography (MEG) recordings from participants viewing audiovisual movies, that low-frequency neuronal information lies at the basis of the synergistic coordination of information across auditory and visual streams. In particular, the phase of the 2–7 Hz delta and theta band responses carries robust (in single trials) and usable information (for parsing the temporal structure) about stimulus dynamics in both sensory modalities concurrently. These experiments are the first to show in humans that a particular cortical mechanism, delta-theta phase modulation across early sensory areas, plays an important “active” role in continuously tracking naturalistic audio-visual streams, carrying dynamic multi-sensory information, and reflecting cross-sensory interaction in real time.
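
To make the notion of low-frequency phase tracking more concrete, the following is a minimal Python sketch of a cross-trial phase coherence measure. It is not the authors' MEG analysis pipeline: the function names, the simulated 5 Hz oscillation (chosen only because it falls inside the 2–7 Hz band mentioned in the text), the 100 Hz sampling rate, and the noise level are all assumptions made for illustration. The idea is that if a response is phase-locked to the stimulus, its phase at a given frequency should be similar across repeated trials, so the mean of the unit phase vectors has a length near 1; if the phase is unrelated to the stimulus, that length falls toward 0.

```python
import cmath
import math
import random


def phase_at_frequency(signal, sample_rate_hz, freq_hz):
    """Phase (radians) of `signal` at `freq_hz`, from a single-bin DFT."""
    coeff = sum(
        x * cmath.exp(-2j * math.pi * freq_hz * n / sample_rate_hz)
        for n, x in enumerate(signal)
    )
    return cmath.phase(coeff)


def cross_trial_phase_coherence(trials, sample_rate_hz, freq_hz):
    """Length of the mean unit phase vector across trials.

    Values near 1 mean the phase is consistent across trials;
    values near 0 mean the phase is scattered.
    """
    unit_vectors = [
        cmath.exp(1j * phase_at_frequency(trial, sample_rate_hz, freq_hz))
        for trial in trials
    ]
    return abs(sum(unit_vectors) / len(unit_vectors))


def simulate_trials(n_trials, locked, rng,
                    sample_rate_hz=100.0, freq_hz=5.0, duration_s=2.0):
    """Hypothetical toy data: a noisy oscillation at `freq_hz`.

    locked=True  -> every trial starts at the same phase.
    locked=False -> every trial starts at a random phase.
    """
    n_samples = int(sample_rate_hz * duration_s)
    trials = []
    for _ in range(n_trials):
        phase0 = 0.0 if locked else rng.uniform(-math.pi, math.pi)
        trials.append([
            math.sin(2 * math.pi * freq_hz * n / sample_rate_hz + phase0)
            + rng.gauss(0.0, 0.5)  # assumed additive Gaussian noise
            for n in range(n_samples)
        ])
    return trials


rng = random.Random(0)
locked_value = cross_trial_phase_coherence(
    simulate_trials(30, True, rng), 100.0, 5.0)
unlocked_value = cross_trial_phase_coherence(
    simulate_trials(30, False, rng), 100.0, 5.0)
print("phase-locked trials:", round(locked_value, 3))
print("random-phase trials:", round(unlocked_value, 3))
```

In this toy setting the phase-locked trials should yield a coherence close to 1, while the random-phase trials should yield a much lower value. A real analysis would additionally need band-pass filtering or time-frequency decomposition, sensor selection, and statistical testing, none of which are sketched here.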
